Solving Formal Math Problems by Decomposition and Iterative Reflection

An agent-based framework that orchestrates interaction between a general-purpose LLM and the Lean 4 environment via reflective decomposition and iterative proof repair.

Read Paper →Method

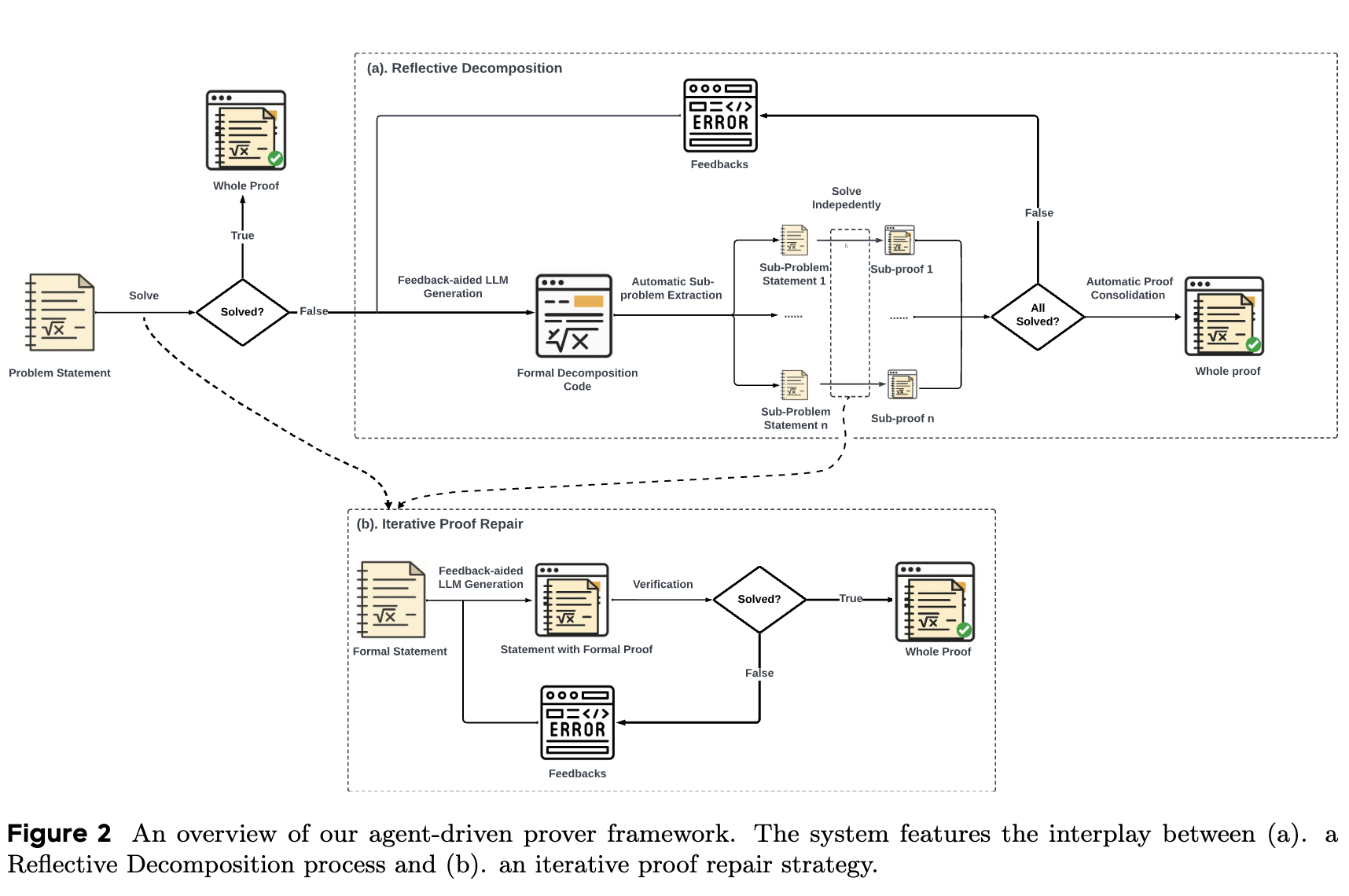

Delta Prover integrates a general-purpose Large Language Model (LLM) with the Lean 4 proof environment. The framework operates through an iterative loop consisting of two primary components: Iterative Proof Repair and Reflective Decomposition.

Let \(S\) denote a formal statement, \(s\) its corresponding natural language version, \(P\) a formal proof, \(p\) an informal proof plan, and \(D\) a formal proof sketch expressed in a Domain-Specific Language (DSL).

Iterative Proof Repair

The LLM is prompted with the formal statement \(S\) to generate an initial formal proof candidate \(P^0\). The prompt includes guidelines on:

- Formatting Conventions: Instructions on syntax following tactics (e.g.,

rcases,cases). - Effective Tactics: Doc-strings and examples for Mathlib tactics such as

linarith,ring, andomega. - Lean 4 Specification: Instructions to strictly output Lean 4 code instead of Lean 3.

If the statement-proof pair \((S, P^0)\) validates against the Lean 4 kernel, the process terminates. If a proof attempt \(P^i\) fails validation, the Lean 4 kernel returns an error message. For the next iteration, the prompt is augmented with:

- The incorrect proof \(P^i\).

- The tactic state and error message from the Lean 4 kernel.

- Retrieved theorems and definitions based on errored names or incorrect identifiers.

The LLM generates a revised proof \(P^{i+1}\). This cycle repeats until a valid proof is found or a predetermined maximum number of iterations is reached.

Reflective Decomposition

For proofs that require multiple steps, the framework breaks down the original problem into sub-problems.

- Initial Formal Sketch Construction: The LLM generates an informal proof plan \(p\) from \((S, s)\). The LLM then generates a formal proof sketch \(D\) using a custom DSL. The prompt includes DSL format guidelines and highlights common auto-formalization pitfalls.

- Sub-problem Extraction and Solving: The DSL parses the draft \(D\) and extracts formal statements for the required sub-proofs (\(S_1, \dots, S_n\)). Valid sub-problems are passed to the Iterative Proof Repair loop.

- Iterative Decomposition Repair: If any sub-problems are not solved by the Iterative Proof Repair loop, the failures are logged. The LLM is prompted to regenerate the proof sketch \(D\) with a revised decomposition strategy, using the list of unsolved sub-problems as feedback.

Overall Framework

Delta Prover initially attempts a direct solution using Iterative Proof Repair. If unsuccessful, it initiates Reflective Decomposition. If Reflective Decomposition yields a list of solved sub-problems, the framework performs Automatic Proof Consolidation. During consolidation, the derived sub-proofs \(P_i\) are substituted back into the DSL-based sketch, and concluding commands are appended to produce the complete formal proof \(P\) for the original statement \(S\).

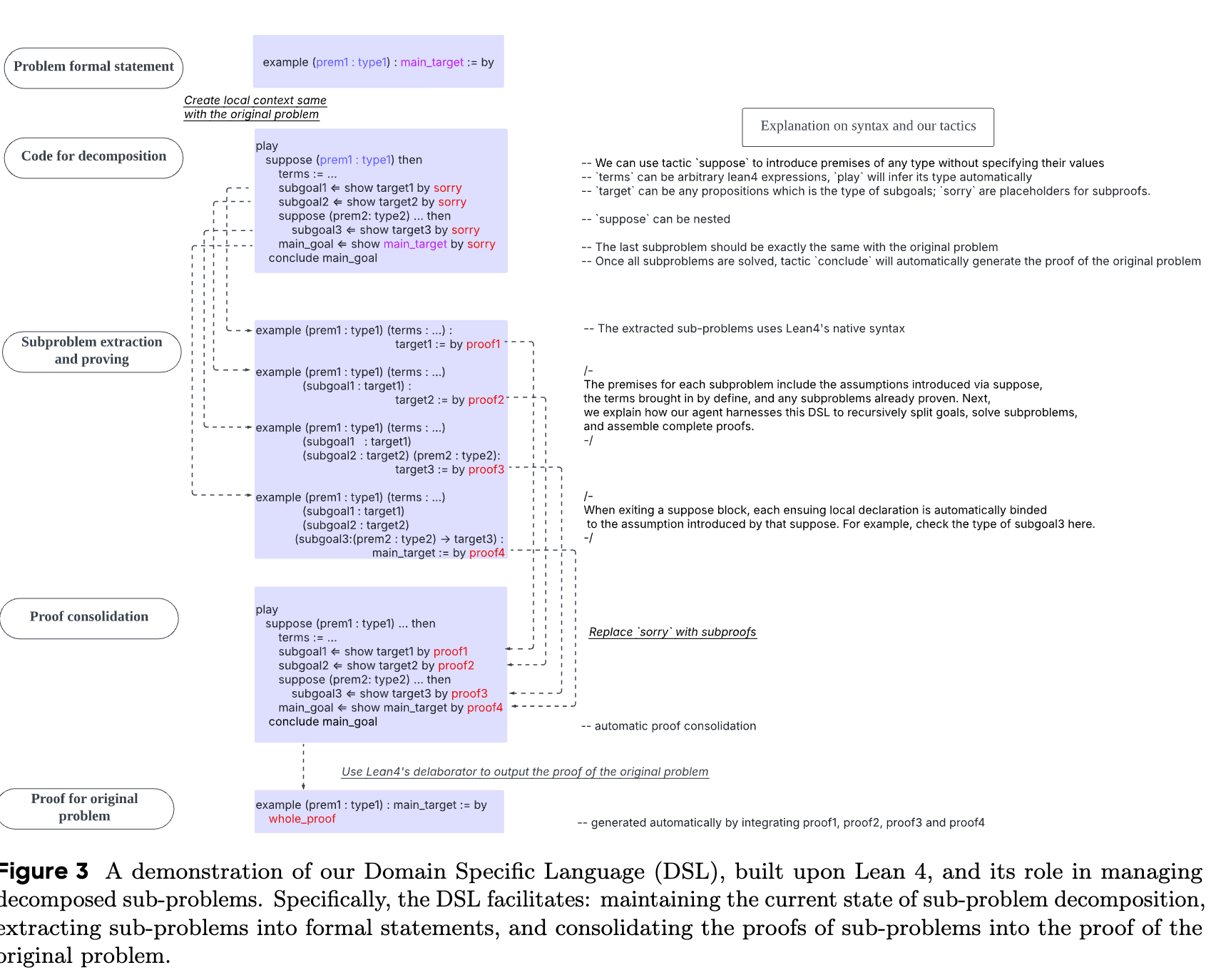

Decomposition via Extended Syntax

The custom Domain-Specific Language (DSL) is built on Lean 4’s metaprogramming facilities using a monad layer, PlayM, built upon TacticM. It manages intermediate proof states and converts them into valid Lean 4 proof scripts.

The DSL provides four tactics:

-

Suppose: Introduces new hypotheses into the proof context. -

Define: Introduces arbitrary Lean 4 expressions and infers their types. -

ShowBy: Creates subgoals and interfaces with Mathlib tactics. It records generated proofs for later assembly. -

Conclude: Consolidates proofs by leveraging recorded steps, dependency graphs, and Lean 4’s delaborator to emit a coherent Lean 4 proof.

Experiments

Basic Experiment Setup

- Model: Gemini 2.5 Pro 05-06 with sampling temperature set to \(1\).

- Benchmarks:

- miniF2F-test: 244 problems from mathematics competitions and the MATH dataset translated into Lean 4.

- miniF2F-test-IMO: A subset consisting of all IMO problems within miniF2F-test.

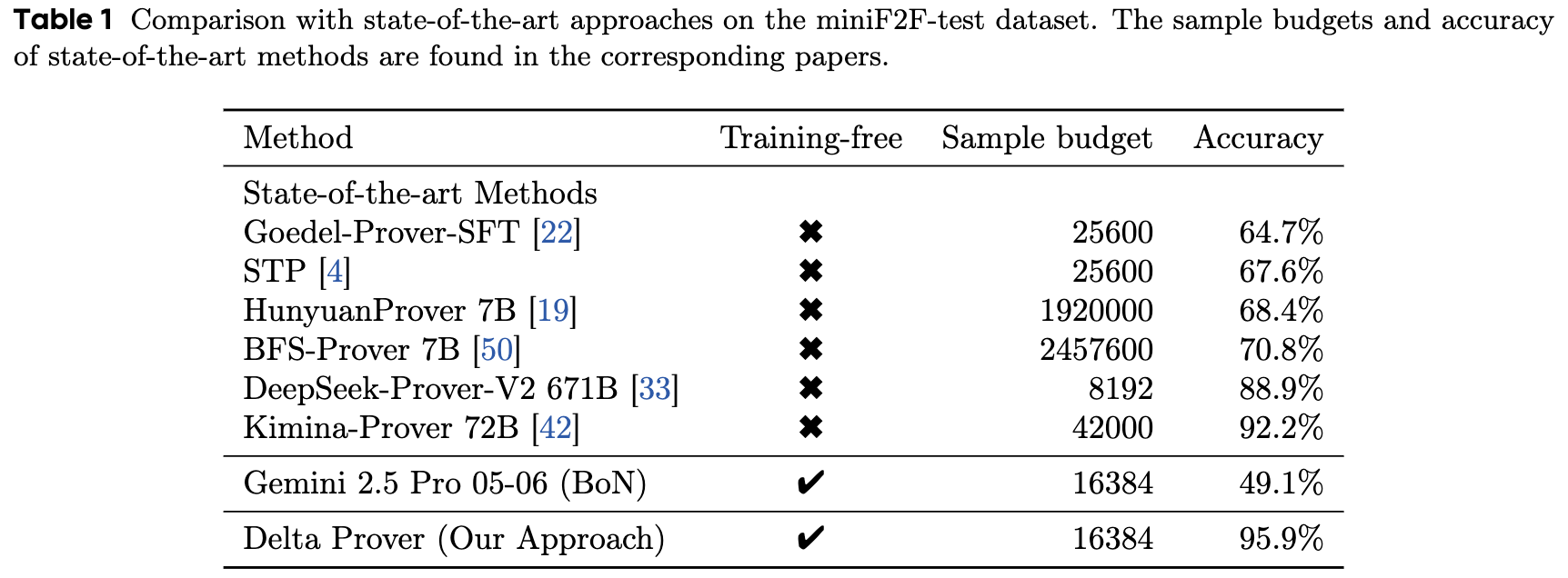

Benchmark Performance

Delta Prover is evaluated against baseline provers on the miniF2F-test benchmark. Sample budgets are determined by recording the number of API calls used for a successful attempt and taking the maximum across all solved problems.

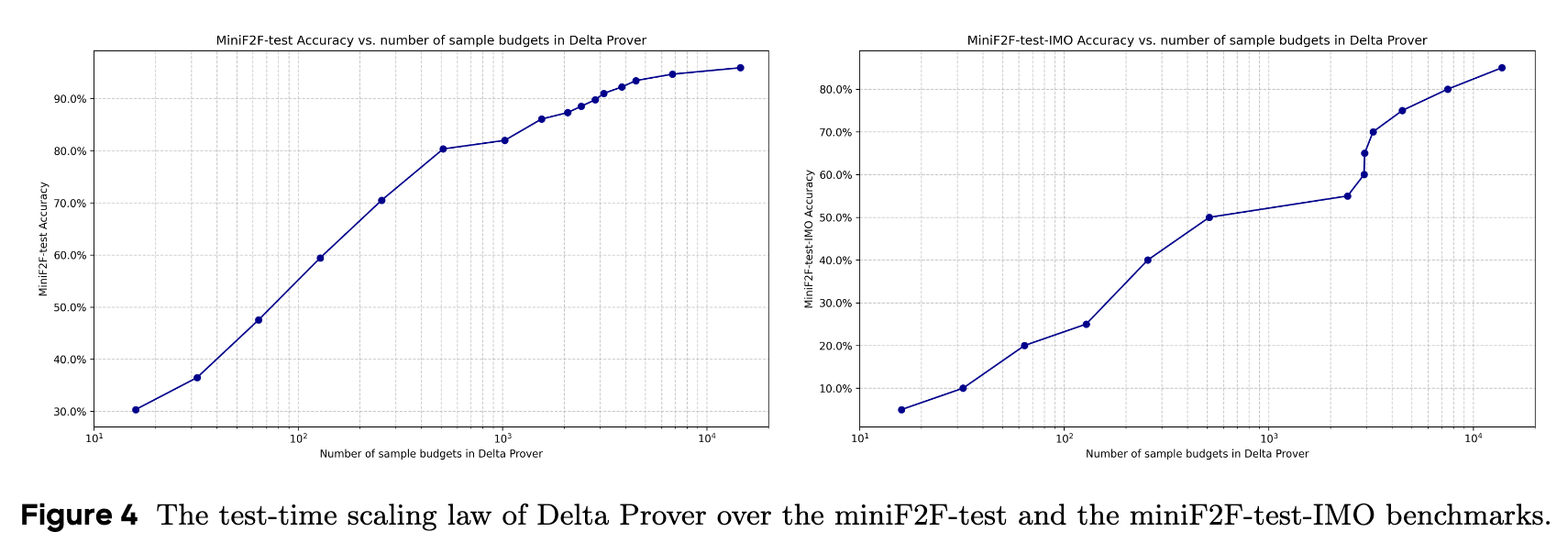

Delta Prover achieves an accuracy of 95.9% on miniF2F-test using a sample budget of 16384 API calls. The test-time scaling properties are tracked by measuring the accuracy relative to the available sample budget.

Ablation Studies

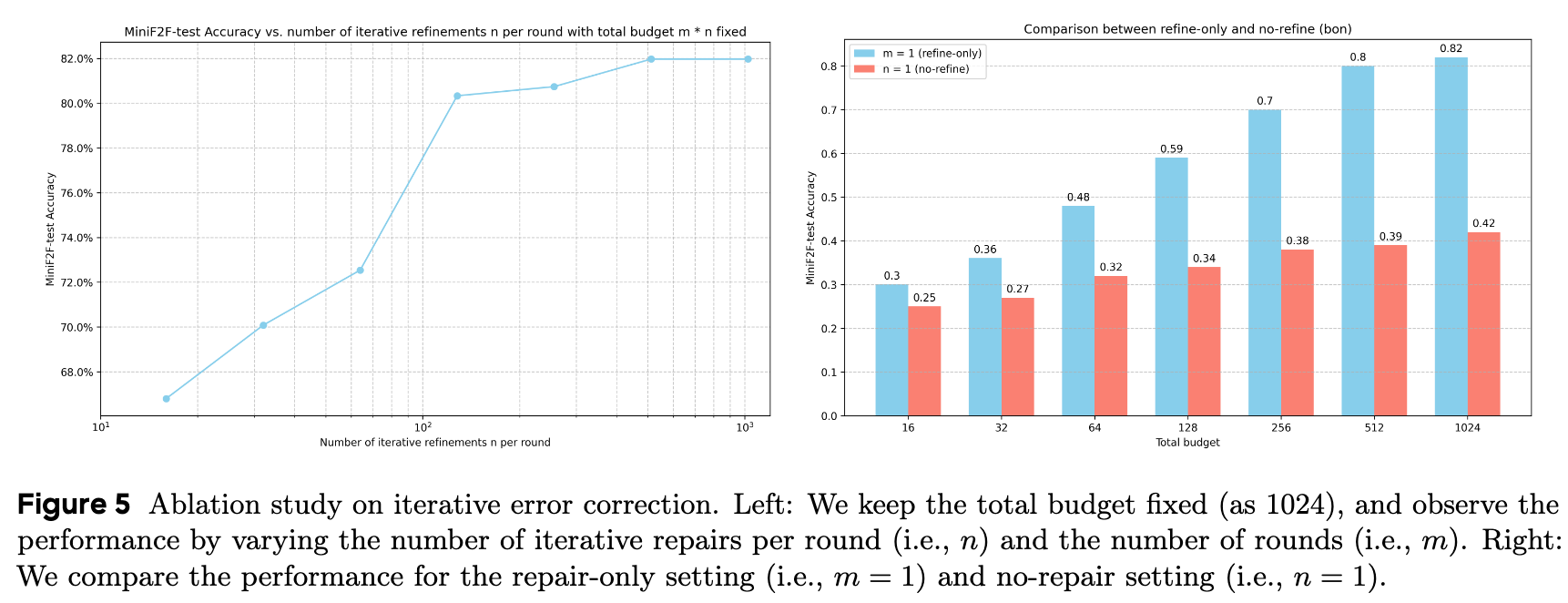

Effect of Iterative Proof Repair The impact of Iterative Proof Repair is tested using only proof construction (without Reflective Decomposition). The total budget is fixed at 1024 API calls. The experiment varies the number of iterative repairs per round (\(n\)) and the number of rounds (\(m\)).

Results indicate that accuracy increases as the number of repairs \(n\) increases for a fixed total budget \(m \times n\). When the number of repairs is constrained to \(n = 1\), the process is equivalent to a Best-of-N (BoN) sampling strategy. The repair-enabled configurations yield higher accuracy than the BoN configuration at equivalent computational budgets.

Effect of Reflective Decomposition The role of Reflective Decomposition is evaluated using IMO 2019 Problem 1 from the miniF2F-test set. Two strategies are compared:

- Baseline: Pure iterative proof correction.

- Delta Prover: Iterative proof correction combined with Reflective Decomposition.

The baseline approach did not find a solution after 1024 API calls. Using Reflective Decomposition, the problem was separated into 83 sub-problems during the initial decomposition round. Each sub-problem required an average of 4 API calls to resolve. The complete problem was solved using approximately \(83 \times 4 = 332\) API calls.