Active Learning for Decision Trees with Provable Guarantees

First polylogarithmic label complexity bounds for actively learning decision trees with multiplicative error guarantees

Read Paper →Published as a Conference Paper

Abstract

This paper advances the theoretical understanding of active learning label complexity for decision trees as binary classifiers. We provide the first analysis of the disagreement coefficient for decision trees and present the first general active learning algorithm that achieves a multiplicative error guarantee, producing a \((1 + \epsilon)\)-approximate classifier. By combining these results, we design an active learning algorithm for decision trees that uses only a polylogarithmic number of label queries in the dataset size.

Key Contributions

Multiplicative Error Algorithm

First active learning algorithm for classification that achieves a (1+ε)-multiplicative error guarantee, stronger than traditional additive models.

Decision Tree Label Complexity

First label complexity bound for active decision tree learning: polylogarithmic in dataset size under natural assumptions.

Disagreement Coefficient Analysis

First explicit bound on the disagreement coefficient for decision trees: θ = O(lnd(n)).

Necessity of Assumptions

Proved that both structural assumptions are necessary: relaxing either leads to polynomial label complexity.

Main Results

For a decision tree classification task over a dataset of n points where each node tests a distinct feature dimension and tree height is at most d, the disagreement coefficient satisfies:

$$\theta = O\!\left(\ln^d(n)\right)$$with a matching lower bound of Ω(c(ln(n) - c')d-1).

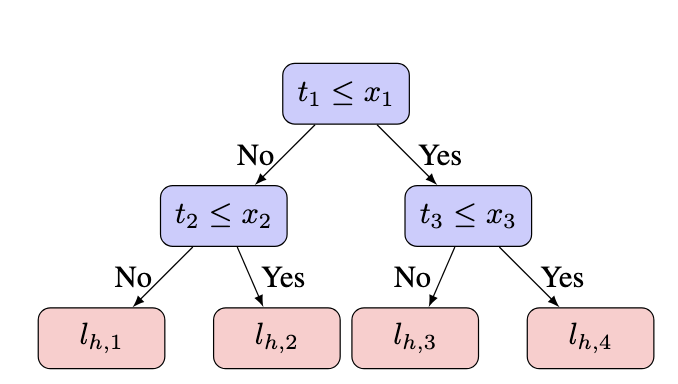

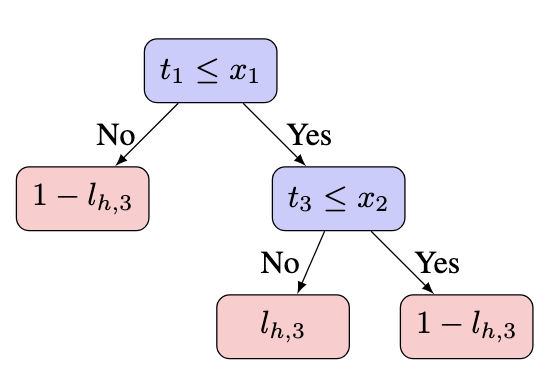

Proof approach: We decompose each decision tree into simpler components called LineTrees—classifiers that isolate a single leaf. By fixing a tree h and analyzing DIS(BH(h, r)), we break the disagreement region according to which leaf each point reaches and the dimension set of the corresponding leaf in a nearby tree h'. Using Lemma D.2, we reduce the analysis of full trees to line trees, and then apply a counting argument (Proposition 3.5) bounding the number of integer tuples whose product is at most nr, yielding the O(lnd(n)) bound.

Algorithm 2 returns a (1 + ε)-approximate classifier with probability > 1 - δ using:

$$O\!\left(\ln(n)\theta^2\!\left(V_H \ln \theta + \ln \frac{\ln n}{\delta}\right) + \frac{\theta^2}{\epsilon^2}\!\left(V_H \ln \frac{\theta}{\epsilon} + \ln \frac{1}{\delta}\right)\right)$$queries, where VH is the VC dimension and θ is the disagreement coefficient.

Proof approach: The algorithm iteratively prunes the hypothesis set by sampling from the disagreement region and eliminating classifiers whose error lower bound exceeds the best upper bound. The key insight is a two-regime argument: if the optimal error on the disagreement region is small (below 1/(16θ)), the hypothesis set radius must halve, so the loop progresses. If it is large, the algorithm enters a direct estimation phase where (1+ε)-approximation becomes easy because the high error provides a large denominator. The disagreement coefficient θ bridges hypothesis ball radius and disagreement region size, enabling this critical inference.

Combining the disagreement coefficient bound with the multiplicative error algorithm, the total number of label queries is:

$$O\!\left(\ln^{2d+2}(n)\!\left(2^d(d + \ln \text{dim})d + \ln \frac{1}{\delta}\right) + \frac{\ln^{2d}(n)}{\epsilon^2}\!\left(2^d(d + \text{dim}) \ln \frac{\ln^d(n)}{\epsilon} + \ln \frac{1}{\delta}\right)\right)$$which is polylogarithmic in n.

Proof approach: This follows directly by substituting the disagreement coefficient θ = O(lnd(n)) from Theorem 1.1 and the VC dimension VH = O(2d(d + ln dim)) of bounded-depth decision trees into the general label complexity bound of Theorem 1.2. Since θ is polylogarithmic in n, all terms in the resulting expression remain polylogarithmic in n, with the dependence on tree depth d and feature dimensionality dim appearing in the constant factors.

Why Multiplicative Error Matters

A key insight of this work is that additive error algorithms cannot be adapted for multiplicative settings. The natural idea of setting \(\epsilon_{\text{additive}} = \epsilon \cdot \eta\) (where \(\eta\) is the optimal error) fails because estimating \(\eta\) itself requires \(\Omega(1/\eta)\) labels, making the label complexity dependent on an unknown, potentially very small quantity.

Our approach is fundamentally different: the algorithm is agnostic to the magnitude of the optimal error. It exploits the fact that when the version space fails to shrink rapidly, this signals high error, which makes \((1+\epsilon)\)-approximation easier.

Algorithm Overview

Algorithm 1: Decision Stump (1D warmup)

Maintains an interval [L, R] of candidate stumps. Each iteration samples labels, bounds errors, and prunes provably suboptimal classifiers. If the interval fails to halve, the algorithm switches to a direct estimation phase using O(1/ε2) additional samples. This two-regime approach is the key innovation.

Algorithm 2: General Binary Classification

Generalizes the stump algorithm by replacing intervals with hypothesis sets Hi, sampling from the disagreement region DIS(Hi), and tracking progress via the radius of the hypothesis set. The disagreement coefficient θ bridges the gap between hypothesis ball radius and disagreement region size.

Structural Assumptions & Their Necessity

Required Unique dimensions per path: Each node on a root-to-leaf path must test a feature dimension distinct from its ancestors.

Required Grid-like data structure: Input data lies on a regular grid X = {(a1, ..., adim) | ai ∈ N, ai ≤ w}.

Proven necessary Without the unique dimension constraint, θ = Ω(n1/dim) — polynomial, not polylogarithmic.

Proven necessary Without grid structure (e.g., data on a line), θ = Ω(n) even with unique dimensions per path.

The uniformity assumption can be partially relaxed by assigning weights \(W_i \in [1, \lambda]\) to each data point, which scales the disagreement coefficient by at most \(\lambda^2\).

Lower Bound

Any active learning algorithm requires at least:

$$\Omega\!\left(\ln\!\left(\frac{1}{\delta}\right) \cdot \frac{1}{\epsilon^2}\right)$$queries to return a (1 + ε)-approximate decision stump with probability > 1 - δ. This shows our algorithm's dependence on ε is close to optimal (up to logarithmic factors).

Acknowledgments

This work is partially supported by DARPA expMath, ONR MURI 2024 award on Algorithms, Learning, and Game Theory, Army-Research Laboratory (ARL) grant W911NF2410052, NSF AF:Small grants 2218678, 2114269, 2347322.